Xinhao Fan

PhD Candidate in Neuroscience

Johns Hopkins University

Biography

My PhD work investigated theoretical questions in neuroplasticity, synergistic information, and computational behavioral modeling. Building on this exploratory foundation, I aim to develop principled mathematical theories of neural representation, with the long-term vision of linking neural population activity to structured subjective experience.

Download my CV.

- Theoretical/Computational Neuroscience

- Physics of Brain/AI

Current Graduate Student in Neuroscience

Johns Hopkins University

BS in Physics, 2020

Nankai University

Recent Publications

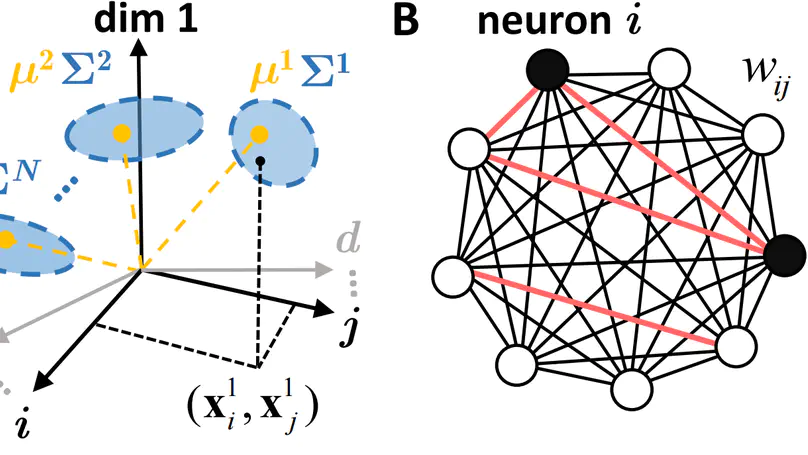

A cornerstone of our understanding of both biological and artificial neural networks is that they store information in the strengths of synaptic connections among the neurons. However, in contrast to the well-established theory for quantifying information encoded by the firing activity of neural networks, there does not exist a framework for quantifying information stored in the network’s connection distribution itself. Here, we develop a theoretical framework for synaptic information by using densely connected Hebbian networks performing autoassociative memory tasks and by modeling data patterns to be stored as log-normal distributions. Specifically, we derive analytical approximations for Shannon mutual information between the data and singletons, pairs, and arbitrary n-tuples of synaptic connections within the network. Our framework corroborates well-established insights regarding pattern storage capacity, supports the principle of distributed coding in neural firing activities, and formalizes the heterogeneity inherent in information encoding across synapses in a network. Notably, it discovers synergistic interactions among synapses, revealing that the information encoded jointly by all the synapses exceeds the ‘sum of its parts’. Taken together, this study introduces a powerful, interpretable framework for quantitatively understanding information storage in the synapses of neural networks, one that illustrates the duality of synaptic connectivity and neural population activity in learning and memory.

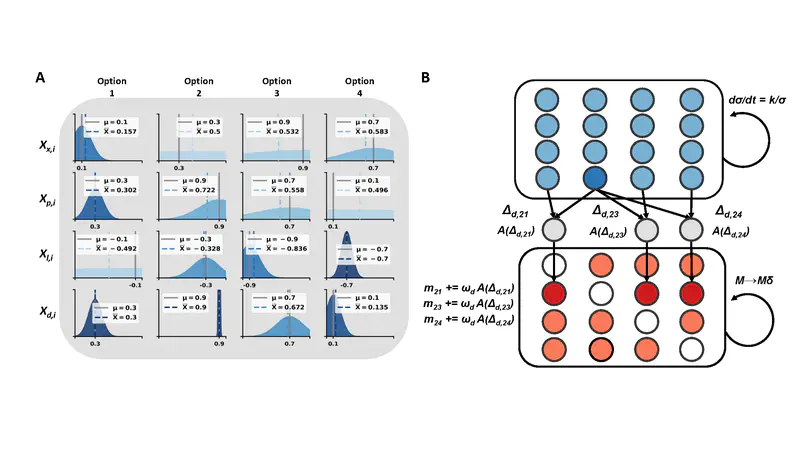

Most decisions we make in daily life require taking a large number of different factors into account. It is generally believed that we select some of them, use only those for the decision process, and disregard the others. In many experimental studies of decision making, this process is dramatically simplified and only very few factors are included. One typical paradigm is choosing between two alternatives (gambles), each of which offers a chance to win a certain amount with a certain probability. In this study, we juxtapose such a simple situation with a more complex one, with more alternatives and more factors. We also measure which of these factors the decision makers are actually interested in (i.e., which they are looking at). We then implement a dozen computational models, each designed to predict the decision a human makes based on which factors each individual looked at. We find two models that predict the choices significantly better than all others. The two models have in common that they, first, explicitly use the order in which they collect the information about the different choices and, second, keep this information in memory.

Research Experience

- Data analysis and model development for human decision-making in complex tasks. Built several parameter-efficient models that reached high performance in predicting participants’ choices.

- Simulating neural spiking and finding correlations between criticality in the brain and different states of consciousness in coma.

- Analyzing a visual system model with information theory. Different parts of the model were found to learn at different paces, which could help further understand and improve existing algorithms.

- Exploring the use of reinforcement learning to solve the k-satisfiability problem. Reproduced AlphaGo Zero on a small scale.

- Several learning-oriented projects on restricted Boltzmann machines and Bayesian inference.

Contact

- 3400 North Charles Street, Baltimore, MD 21218